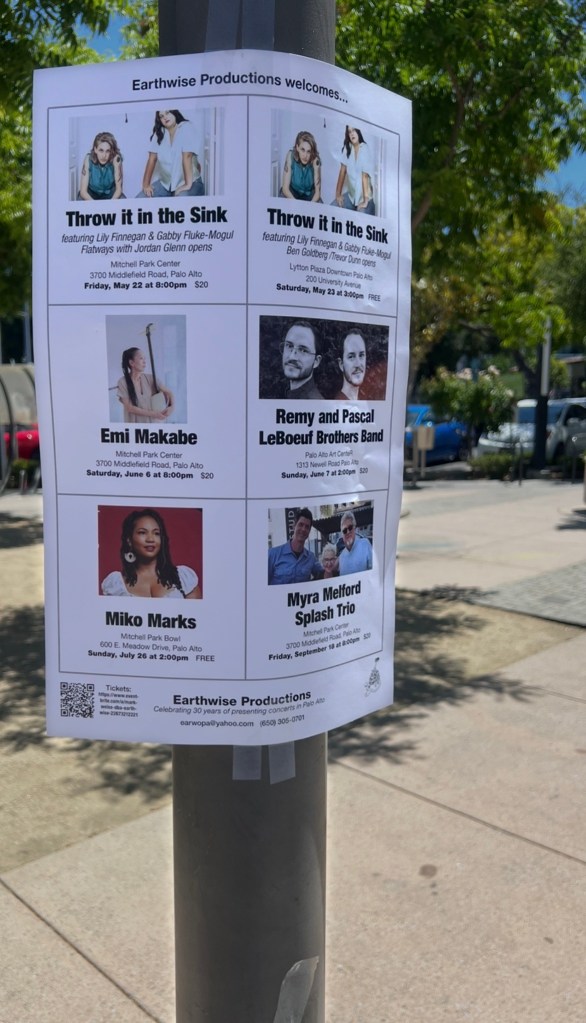

TONITE AT MITCHELL PARK CENTER, TOMORROW AT LYTTON PLAZA

Dramatis personae:

Lily Finnegan

gabby fluke-mogul

Jordan Glenn

Sudhu Tewari

Matt Robidoux

Scott Amendola

Trevor Dunn

Michael Coleman

Ben Goldberg musicians

I just noticed that the illustration on the cover of this week’s Metro for Cinco de Mayo is by Rayos Magos, the artist who sold me this T-shirt.

May

May 15 Caroline Davis Julian Shore Chris Tordini Tim Angulo M 8 pm

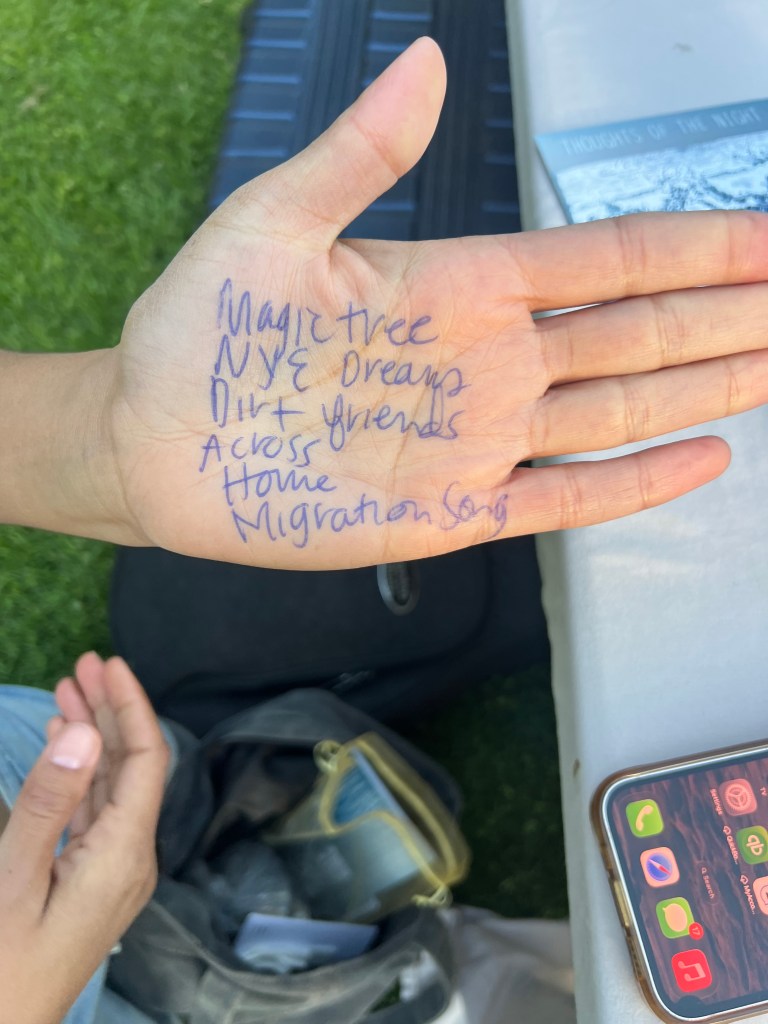

May 22 Throw it in the Sink Lily Finnegan gabby fluke-mogul, Flatways Jordan Glenn Sudhu Tewari Matt Robidoux M 8 pm

May 23 Throw it in the Sink Lily Finnegan gabby fluke-mogul, Scott Amendola Trevor Dunn Michael Coleman Ben Goldberg L 3 pm

June 6 Emi Makabe Thomas Morgan Kenny Wollesen Vitor Gonçalves M 8 pm

June 7 Remy LeBoeuf Pascal LeBoeuf Ben Flocks Martin Nevin Christian Euman P 2 pm

July 26 Miko Marks MPA 2 pm

Sept 18 Splash Myra Melford Michael Formanek Ches Smith, Tammy Hall Leberta Lorál M 8 pm

October 2, 3 Hannah Marks Nathan Reising Steven Crammer Jonathan Paik, Vinny Golia M 8 pm

October 25 Adam O’Farrill LS 2 pm

November 8 Brandon Woody M 2 pm

J = Johnson Park (free)

L = Lytton Plaza (free)

LS = Lucie Stern

M = Mitchell Park Community Center $20

MPA = Mitchell Park Amphitheater (free)

P = Palo Alto Art Center $20

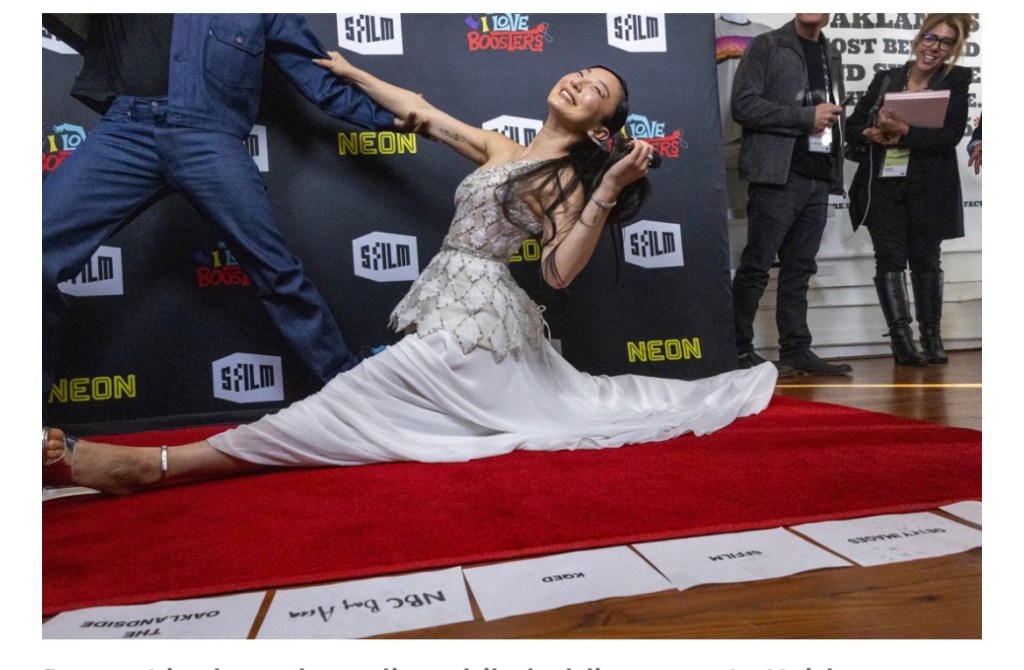

Stephanie Syjuco is in the Chronicle today because she is being honored by the Berkeley. I noticed the article ran next to the Sudoku.

His new film, I Love Boosters, opens next month.

One more reason to see the film: Sissy Splitz.

At the Adam Klipple Quintet concert on April 18, I noticed that someone had put a green metal table, with six seats, in the middle of the plaza, where we normally site our bands. We had to move the stage 50 feet to the south, then angle it toward the fountain, where people sit, watch, and listen. We had produced 40 previous events with the old plaza configuration. I spoke about this at tonight’s Parks Commission meeting. In her questions to staff, Chair Amanda Brown seemed to imply that the bench was installed by a “Friends of” group without commission or council input.

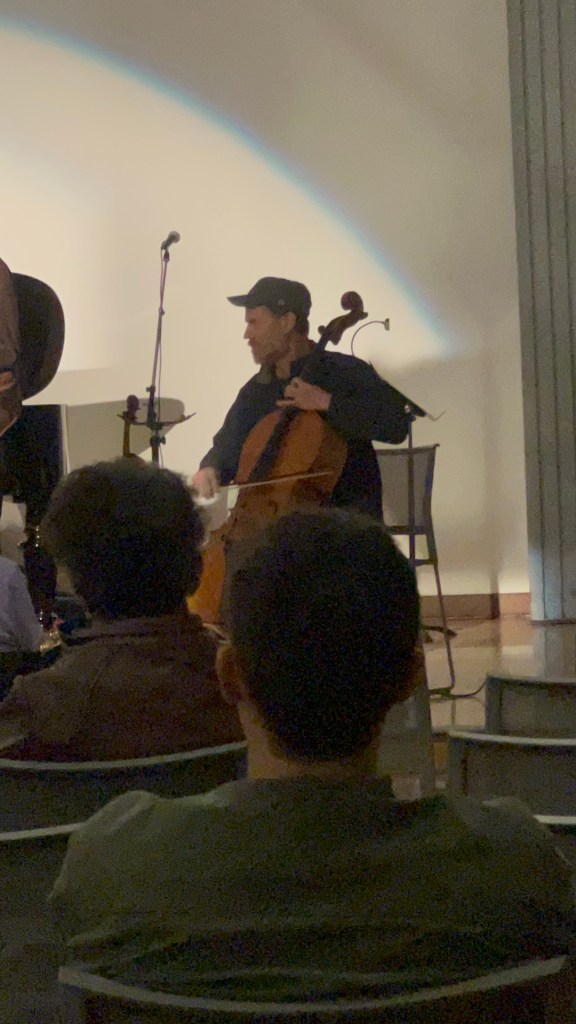

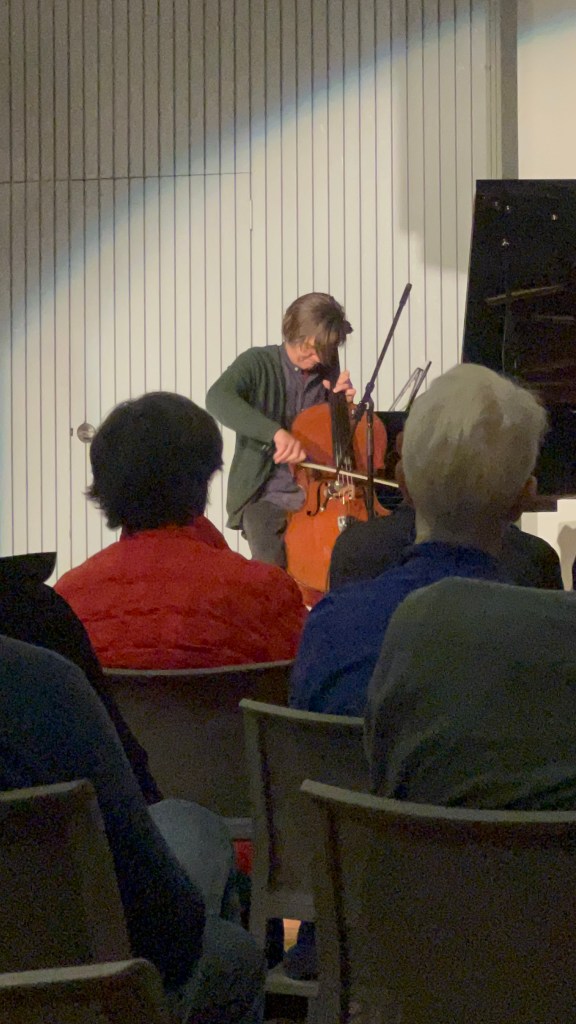

Here is a picture of Stephan Crump from 2022 that shows the normal stage position relative to the power outlet/plow and the fountain.

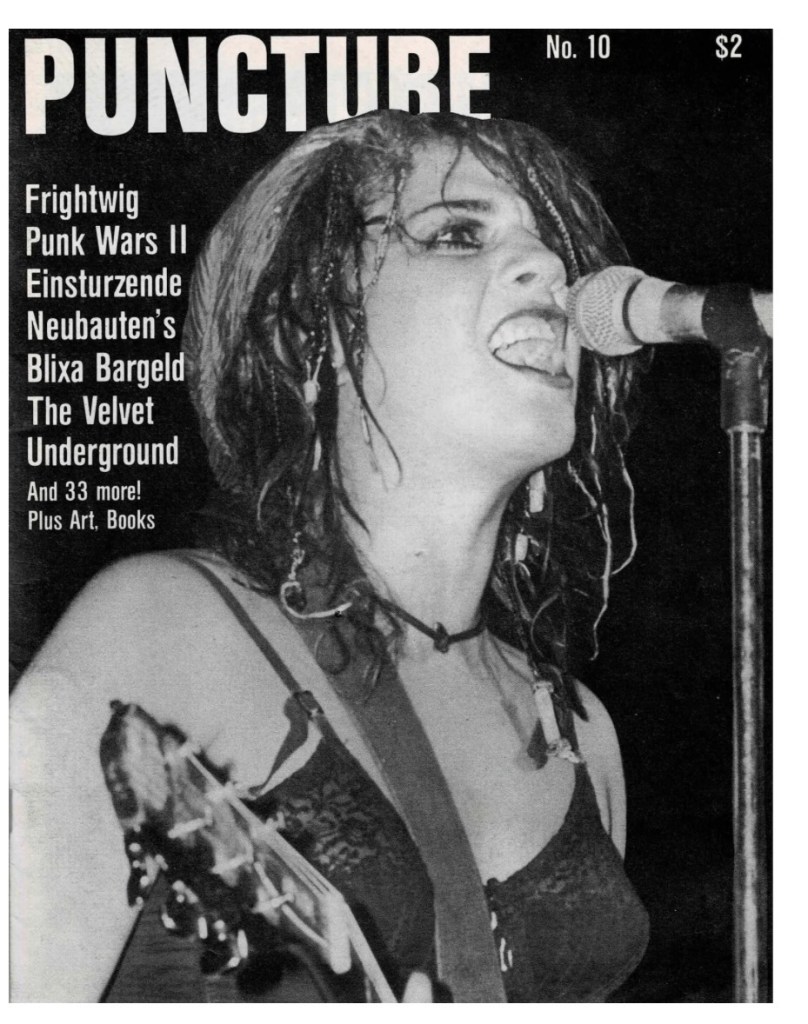

My classmate Mia D’Bruzzi, according to her website, made 51 musical appearances in 2025, ranging from solo acoustic sets at a farmers market to touring with one of her bands to reunion shows with Frightwig.

photo by Solange Sylvain